Many of you have heard that a website needs a "sitemap", but not everyone fully understands why it matters. In this article, we review the Sitemap.xml file and describe the options for generating it for various types of websites.

Structure of the article:

- Sitemap purpose

- Sitemap format description

- Character masking

- Sitemap partitioning

- Sitemap.xml location and indexing

- Using non-Latin addresses

- Questions on the need for a sitemap

- Overview of options for generating Sitemap.xml

Sitemap.xml purpose

A sitemap is an XML or text (TXT) file that lists the website's URLs (links to pages or images) and informs search engines about new pages on your resource. By reading the URLs in the sitemap, the search engine can find all the relevant pages of your website.

Of course, search engines will index your website without a sitemap, often just as well. In some cases, however, they may have difficulty indexing pages. The main reasons for pages not being indexed include:

- pages have a large crawl depth (typical for large web resources)

- the website has pages without navigation links (pages cannot be visited through the internal website navigation)

- the website has dynamic URLs

In these cases, the robot may never reach such pages. In the first case, because of deep nesting, it exhausts the website's crawling limits before reaching the final URL. In the second, it physically cannot see the pages, because it cannot follow links to them (for example, when a link exists but is generated by JavaScript, defined via CSS, or obfuscated, so the search robot does not see it in the page source code).

However, once the search robot knows about the Sitemap.xml file, it will crawl it periodically and can discover new webpages in the order and with the priority you suggest, focusing on the pages that matter most to you at the moment.

Sitemap format description

A sitemap can be in one of two formats: plain text (TXT) or XML.

The text format is a simple UTF-8 encoded file that lists the website URLs one per line. Text sitemap example:

https://www.site.com/page-1.html
https://www.site.com/page-2.html

The XML format extends the text format and gives search engine bots additional information about each URL. Sitemap.xml file example:

<?xml version="1.0" encoding="UTF-8"?>
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>http://www.site.com/</loc>
    <lastmod>2018-10-03</lastmod>
    <changefreq>monthly</changefreq>
    <priority>1.0</priority>
  </url>
  <url>
    <loc>http://www.site.com/page-1.html</loc>
    <lastmod>2018-10-03</lastmod>
    <changefreq>monthly</changefreq>
    <priority>0.9</priority>
  </url>
  <url>
    <loc>http://www.site.com/page-2.html</loc>
    <lastmod>2018-10-03</lastmod>
    <changefreq>monthly</changefreq>
    <priority>0.9</priority>
  </url>
  ...
  <url>
    <loc>http://www.site.com/page-N.html</loc>
    <lastmod>2018-10-03</lastmod>
    <changefreq>monthly</changefreq>
    <priority>0.9</priority>
  </url>
</urlset>

Description of XML elements to pay attention to:

- url (required) – contains all the information about a specific URL

- loc (required) – the page URL, including the protocol (for example, https://). URLs with parameters require masking

- lastmod – date and time of the last page change in W3C Datetime format. If you don't need the time, you can omit it and use the YYYY-MM-DD format

- changefreq – the expected frequency of page changes. Allowed values: always, hourly, daily, weekly, monthly, yearly, never

- priority – the page's importance relative to other URLs on the website. The valid range is 0.0 to 1.0; the more important the page, the higher the priority. The main page is usually given a priority of 1.0; the default priority is 0.5
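The following minimal Python sketch illustrates the element rules above. It uses only the standard library (Python 3.7+), serializes a list of entries into a urlset document, and rejects changefreq values or priorities outside the allowed ranges. The `SitemapEntry` type and the `build_urlset` function are hypothetical names for this example, not part of any existing library. ElementTree escapes `&`, `<` and `>` in element text automatically, which covers the most common masking case described below.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import Iterable, Optional

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
CHANGEFREQ_VALUES = {"always", "hourly", "daily", "weekly", "monthly", "yearly", "never"}


@dataclass
class SitemapEntry:
    """One <url> element (hypothetical helper type for this sketch)."""
    loc: str                          # absolute page URL (required)
    lastmod: Optional[str] = None     # W3C Datetime, e.g. "2018-10-03"
    changefreq: Optional[str] = None  # one of CHANGEFREQ_VALUES
    priority: Optional[float] = None  # 0.0..1.0; protocol default is 0.5


def build_urlset(entries: Iterable[SitemapEntry]) -> str:
    """Serialize entries into a Sitemap.xml document string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for entry in entries:
        # Validate optional fields against the values the protocol allows.
        if entry.changefreq is not None and entry.changefreq not in CHANGEFREQ_VALUES:
            raise ValueError(f"invalid changefreq: {entry.changefreq!r}")
        if entry.priority is not None and not 0.0 <= entry.priority <= 1.0:
            raise ValueError(f"priority out of range: {entry.priority}")
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = entry.loc  # &, <, > are escaped on output
        if entry.lastmod is not None:
            ET.SubElement(url, "lastmod").text = entry.lastmod
        if entry.changefreq is not None:
            ET.SubElement(url, "changefreq").text = entry.changefreq
        if entry.priority is not None:
            ET.SubElement(url, "priority").text = str(entry.priority)
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    print(build_urlset([
        SitemapEntry("http://www.site.com/", "2018-10-03", "monthly", 1.0),
        SitemapEntry("http://www.site.com/page-1.html", "2018-10-03", "monthly", 0.9),
    ]))
```

Optional tags are emitted only when a value is set, so leaving a field as `None` lets search engines fall back to the protocol defaults.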

Note: the sitemap content is only a recommendation for search robots. If you set the change frequency to weekly, the robot may still crawl the pages more often. Conversely, setting it to hourly does not mean that search engines will reindex the page every hour.

Other XML sitemap formats:

- Image sitemap file. A separate sitemap for images is useful if the images are not directly accessible to the bot (for example, if they are loaded using JavaScript). Often, however, you can use a regular Sitemap.xml and list image links there along with the regular URLs. Learn more about image sitemaps in Google Help

- News sitemap file. It is used to get your website's news materials indexed quickly; your resource must be included in Google News. Requirements: the file must contain no more than 1,000 URLs, and only URLs of news published within the past two days. Learn more about news sitemaps in Google Help
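You can apply the two news sitemap requirements above as a simple filter before generating the file. The following Python sketch assumes that your articles are available as (URL, publication time) pairs with timezone-aware datetimes. `select_news_urls` is a hypothetical helper. It only selects URLs and does not emit the Google News-specific XML tags.

```python
from datetime import datetime, timedelta, timezone
from typing import List, Tuple

MAX_NEWS_URLS = 1000             # at most 1,000 URLs per news sitemap
NEWS_WINDOW = timedelta(days=2)  # only news from the past two days


def select_news_urls(articles: List[Tuple[str, datetime]], now: datetime) -> List[str]:
    """Return URLs of recent articles, newest first, capped at MAX_NEWS_URLS."""
    cutoff = now - NEWS_WINDOW
    recent = [(url, published) for url, published in articles if published >= cutoff]
    recent.sort(key=lambda item: item[1], reverse=True)
    return [url for url, _ in recent[:MAX_NEWS_URLS]]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    sample = [
        ("https://www.site.com/news/today.html", now - timedelta(hours=3)),
        ("https://www.site.com/news/last-week.html", now - timedelta(days=7)),
    ]
    print(select_news_urls(sample, now))  # ['https://www.site.com/news/today.html']
```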

Masking

Masking (entity escaping) in Sitemap.xml applies to URLs. The following special characters must be replaced with their XML entity codes:

- Ampersand: & -> &amp;

- Single quote: ' -> &apos;

- Double quote: " -> &quot;

- Greater than: > -> &gt;

- Less than: < -> &lt;

Under XML rules, a regular URL with parameters and unmasked special characters is therefore invalid, for example:

Standard page URL (not valid in a Sitemap):

https://www.site.com/index.php?page=news&date=22071981

Valid URL in a Sitemap, with masking (the "&" character is replaced by "&amp;"):

<loc>https://www.site.com/index.php?page=news&amp;date=22071981</loc>

Non-ASCII characters in URLs also require masking in addition to &. They are percent-encoded, with each UTF-8 byte written as %XX. Example URL ("контакты" is Russian for "contacts"):

http://www.site.com/контакты.html

The same URL, masked for insertion into a Sitemap file:

http://www.site.com/%D0%BA%D0%BE%D0%BD%D1%82%D0%B0%D0%BA%D1%82%D1%8B.html
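You can combine both kinds of masking in one step before writing a URL into `<loc>`. The following is a minimal Python sketch that uses only the standard library. `urllib.parse.quote` percent-encodes non-ASCII characters as UTF-8 bytes, and `xml.sax.saxutils.escape` replaces &, <, > and the quote characters passed in its extra mapping with XML entities. The name `sitemap_loc` and the set of characters kept as-is are assumptions for this example.

```python
from urllib.parse import quote
from xml.sax.saxutils import escape

# URL delimiters that must keep their meaning, plus "%" so that already
# encoded sequences are not encoded twice (an assumption of this sketch).
URL_SAFE_CHARS = ":/?#[]@!$&'()*+,;=%"


def sitemap_loc(url: str) -> str:
    """Return a URL masked for use inside <loc>...</loc>."""
    # 1. Percent-encode non-ASCII characters (and spaces, quotes, < >) as UTF-8 bytes.
    encoded = quote(url, safe=URL_SAFE_CHARS)
    # 2. Replace XML special characters with entities. After step 1 only
    #    & and ' can remain, but all five are mapped for safety.
    return escape(encoded, {"'": "&apos;", '"': "&quot;"})


if __name__ == "__main__":
    print(sitemap_loc("https://www.site.com/index.php?page=news&date=22071981"))
    # https://www.site.com/index.php?page=news&amp;date=22071981
    print(sitemap_loc("http://www.site.com/контакты.html"))
    # http://www.site.com/%D0%BA%D0%BE%D0%BD%D1%82%D0%B0%D0%BA%D1%82%D1%8B.html
```

The order matters: percent-encoding first and entity escaping second produces `&amp;` exactly once, without encoding the ampersand itself as `%26`.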

Sitemap partitioning

A Sitemap.xml file is limited both in the number of URLs it contains and in its size. Each Sitemap.xml file may contain no more than 50,000 URLs and must not exceed 50 MB (52,428,800 bytes). If necessary, you can compress the file with gzip, but its uncompressed size must still not exceed 50 MB. So if you need to list more than 50,000 URLs, you should create several Sitemaps.
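You can gzip a sitemap with Python's standard `gzip` module. In the following minimal sketch, `gzip_sitemap` is a hypothetical helper name. It checks the uncompressed UTF-8 size against the 50 MB limit before compressing, because compression does not lift that limit.

```python
import gzip

# Per-file limit from the Sitemap protocol: 50 MB uncompressed.
MAX_UNCOMPRESSED_BYTES = 52_428_800


def gzip_sitemap(xml_text: str) -> bytes:
    """Gzip a sitemap after checking its uncompressed size."""
    raw = xml_text.encode("utf-8")
    if len(raw) > MAX_UNCOMPRESSED_BYTES:
        # Compression does not help here: the file must be split instead.
        raise ValueError(f"{len(raw)} bytes uncompressed exceeds the 50 MB limit")
    return gzip.compress(raw)
```

You can then save the returned bytes, for example, as sitemap.xml.gz. This file name is chosen for illustration.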

Sitemap partitioning lets you get around these restrictions and easily generate Sitemaps for tens or hundreds of thousands of pages.

To partition the sitemap, create a main index file, Sitemap.xml, that links to child files. Each child file is a standard Sitemap.xml containing a list of your website's final URLs. In the index file, each link to a child sitemap goes in the same <loc> tag, wrapped in a <sitemap> tag, and the whole list is wrapped in <sitemapindex> (child sitemaps can be named arbitrarily).

Index XML sitemap file example:

<?xml version="1.0" encoding="UTF-8"?>
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap>
    <loc>http://www.site.com/sitemap-1.xml</loc>
    <lastmod>2018-09-25T21:38:17+00:00</lastmod>
  </sitemap>
  <sitemap>
    <loc>http://www.site.com/sitemap-2.xml</loc>
    <lastmod>2018-09-21</lastmod>
  </sitemap>
</sitemapindex>

Child XML sitemap files use the same format as a standard Sitemap.xml.
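The following Python sketch shows the partitioning scheme. It assumes that you already have the full list of final URLs. `build_partitioned_sitemaps`, `render_urlset` and the `sitemap-N.xml` file names are hypothetical choices for this example. The sketch splits only by URL count, so a production generator would also need to check each child file against the 50 MB size limit. It escapes URLs with `xml.sax.saxutils.escape`, which handles the `&` case.

```python
from typing import Dict, List
from xml.sax.saxutils import escape

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000


def render_urlset(urls: List[str]) -> str:
    """A child sitemap with <loc> only (optional tags omitted for brevity)."""
    lines = ['<?xml version="1.0" encoding="UTF-8"?>', f'<urlset xmlns="{SITEMAP_NS}">']
    lines += [f"  <url><loc>{escape(u)}</loc></url>" for u in urls]
    lines.append("</urlset>")
    return "\n".join(lines)


def build_partitioned_sitemaps(urls: List[str], base_url: str) -> Dict[str, str]:
    """Split urls into child sitemaps and return {file name: XML text}.

    The index is stored under "sitemap.xml"; children are named
    "sitemap-1.xml", "sitemap-2.xml", ... (any names would work).
    """
    files: Dict[str, str] = {}
    index = ['<?xml version="1.0" encoding="UTF-8"?>', f'<sitemapindex xmlns="{SITEMAP_NS}">']
    # One child file per block of up to MAX_URLS_PER_FILE URLs.
    for n, start in enumerate(range(0, len(urls), MAX_URLS_PER_FILE), start=1):
        name = f"sitemap-{n}.xml"
        files[name] = render_urlset(urls[start:start + MAX_URLS_PER_FILE])
        index.append(f"  <sitemap><loc>{escape(base_url + name)}</loc></sitemap>")
    index.append("</sitemapindex>")
    files["sitemap.xml"] = "\n".join(index)
    return files
```

For 120,000 URLs, this produces three child files (50,000 + 50,000 + 20,000 URLs) and an index that links to all three.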

Sitemap.xml location and indexing

The standard sitemap location is the website root, for example:

https://www.site.com/sitemap.xml

When placing "sitemap.xml", keep in mind that its location determines which URLs it can include. A sitemap at http://site.com/news/sitemap.xml can only include URLs that begin with http://site.com/news/, and must not include, for example, addresses that begin with http://site.com/pages/. Examples of valid URLs for http://site.com/news/sitemap.xml:

http://site.com/news/25092018/
http://site.com/news/news-all/

Invalid URLs in the http://site.com/news/sitemap.xml file include:

http://site.com/pages/page-1/
http://site.com/images/1x1.gif
http://site.com/contacts/

Thus, in order to avoid problems with incorrect indexing, it is strongly recommended to place Sitemap.xml in the website root directory.
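You can express the location rule as a small check. This Python sketch assumes that a URL is in scope when it uses the same protocol and host as the sitemap and its path starts with the sitemap's directory. `in_sitemap_scope` is a hypothetical helper name.

```python
from urllib.parse import urlsplit


def in_sitemap_scope(sitemap_url: str, page_url: str) -> bool:
    """True if page_url may be listed in the sitemap located at sitemap_url."""
    s, p = urlsplit(sitemap_url), urlsplit(page_url)
    # Same protocol and host are required (http vs https and "www." matter).
    if (s.scheme, s.netloc.lower()) != (p.scheme, p.netloc.lower()):
        return False
    # Directory that contains the sitemap file, e.g. "/news/" or "/".
    directory = s.path.rsplit("/", 1)[0] + "/"
    return (p.path or "/").startswith(directory)


if __name__ == "__main__":
    sitemap = "http://site.com/news/sitemap.xml"
    print(in_sitemap_scope(sitemap, "http://site.com/news/25092018/"))  # True
    print(in_sitemap_scope(sitemap, "http://site.com/pages/page-1/"))   # False
```

For a sitemap in the root directory, the computed directory is "/", so every path on the same host passes. This is why the root location avoids such problems.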

Sitemap indexing

By default, search robots check the website root directory, so over time they are likely to find your sitemap and follow its links.

However, to help search bots find your Sitemap sooner, take a few steps:

- Place the sitemap link in the "robots.txt" file

- Submit the link to "sitemap.xml" in the Google webmaster panel (now called Google Search Console)

Sitemap link in "robots.txt"

User-agent: *
...
Sitemap: https://site.com/sitemap.xml
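The following sketch shows how a crawler can pick up the Sitemap directive. Python's standard `urllib.robotparser` module can parse robots.txt content and return the declared sitemap URLs (`site_maps()` requires Python 3.8+). The robots.txt text and its `/admin/` rule are sample data for this sketch, and `sitemaps_from_robots` is a hypothetical helper.

```python
from urllib.robotparser import RobotFileParser

# Sample robots.txt content; in practice it is served at https://site.com/robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/

Sitemap: https://site.com/sitemap.xml
"""


def sitemaps_from_robots(robots_txt: str) -> list:
    """Return the Sitemap: URLs declared in a robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # site_maps() returns None when no Sitemap: line is present.
    return parser.site_maps() or []


if __name__ == "__main__":
    print(sitemaps_from_robots(ROBOTS_TXT))  # ['https://site.com/sitemap.xml']
```

The Sitemap directive does not depend on the User-agent groups, so it can appear anywhere in the file.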

Adding a sitemap to Google webmaster panel

Using non-Latin addresses

So that various search engines interpret URLs correctly, it is recommended to convert all non-Latin domain names (Cyrillic, for example) to Punycode and to use masking (percent-encoding) for non-Latin page paths.

That is, instead of the address:

http://www.ёэлектроника.рф/каталог/лампы/

Use the encoded URL:

http://www.xn--80ajjhbcqhrt1jzb.xn--p1ai/%D0%BA%D0%B0%D1%82%D0%B0%D0%BB%D0%BE%D0%B3/%D0%BB%D0%B0%D0%BC%D0%BF%D1%8B/
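You can automate this conversion. The following is a minimal Python sketch that uses only the standard library. It converts the host with Python's built-in `idna` codec, which follows the older IDNA 2003 rules (the third-party `idna` package implements IDNA 2008). It percent-encodes the path and query with `urllib.parse.quote`. `to_ascii_url` is a hypothetical helper name. Use the Punycode label the converter actually produces for your domain.

```python
from urllib.parse import quote, urlsplit, urlunsplit


def to_ascii_url(url: str) -> str:
    """Convert an absolute URL with a non-Latin host and path to ASCII."""
    parts = urlsplit(url)
    # Host: each label is converted to Punycode ("xn--...") by the idna codec.
    host = parts.hostname.encode("idna").decode("ascii")
    if parts.port is not None:
        host += f":{parts.port}"
    # Path and query: percent-encode UTF-8 bytes, keeping URL delimiters.
    path = quote(parts.path, safe="/%")
    query = quote(parts.query, safe="=&%")
    return urlunsplit((parts.scheme, host, path, query, parts.fragment))


if __name__ == "__main__":
    print(to_ascii_url("http://www.ёэлектроника.рф/каталог/лампы/"))
```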

Questions on the need for a sitemap

A sitemap is undoubtedly desirable on any website. On the one hand, it is not strictly necessary, since the search robot will eventually visit your website and crawl all pages reachable via links. On the other hand, for websites with frequent content updates where the order and priority of crawling matter (media, news agencies, etc.), this file is vital. It lets them tell search engines which pages to index first and which later.

Therefore, a fair question arises: is Sitemap.xml really necessary for your particular website? Let us take a closer look.

This file is clearly relevant for websites with 1,000 pages or more, as well as for websites whose number of pages is growing rapidly and whose content requires frequent reindexing. With this file, the search engine always has an up-to-date list of your website's pages at hand and can use it to index all changes promptly. In short, the file matters for websites where content changes frequently and in large volumes (50 pages added, 40 deleted, 175 updated, etc.):

- Media, news portals

- Internet portals

- Product catalogs, aggregators

- Online stores

- Forums, feedback websites, question-and-answer websites

Such websites need this file first and foremost, since it affects how up to date their information is in search results.

Example of indexing using a sitemap when adding a large number of pages to a website:

Note: in this case, Sitemap files must be generated on the server side. Generating a sitemap with online services or PC software is impractical, because it is slow and uploading the data to the server is laborious. For large portals, catalogs and online stores, these files may need to be updated almost every hour (for example, when prices change in an online store).
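When you regenerate the sitemap on the server (for example, with an hourly scheduled job such as cron), replace the file atomically so that a crawler never downloads a half-written sitemap. The following minimal Python sketch uses the "write a temporary file, then rename it" pattern. `publish_sitemap` is a hypothetical helper. The 0o644 permission mode is an assumption meant to keep the file readable by the web server.

```python
import os
import tempfile


def publish_sitemap(xml_text: str, target_path: str) -> None:
    """Write xml_text to target_path without exposing a partially written file."""
    directory = os.path.dirname(os.path.abspath(target_path))
    # Create the temporary file in the target directory so the final rename
    # stays on one filesystem (required for an atomic replace on POSIX).
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as tmp:
            tmp.write(xml_text)
        # mkstemp creates the file as owner-only (0o600); make it world-readable.
        os.chmod(tmp_path, 0o644)
        # Swap the new file in place of the old one in a single step.
        os.replace(tmp_path, target_path)
    except BaseException:
        # Clean up the temporary file if anything went wrong before the swap.
        if os.path.exists(tmp_path):
            os.unlink(tmp_path)
        raise
```

Readers that already opened the old file keep reading it. New requests get the complete new file.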

The second category of websites that also need this file includes websites with 100 to 1,000 pages representing businesses and services, as well as informational websites:

- Websites selling goods and services

- Company and representative office websites

- Blogs

New pages appear on such websites at a steady pace, and pages and sections are deleted even less often. These websites should still have Sitemap XML files, but here the file matters most for the initial indexing of the website (so that in a single crawl of the file, the search robot learns about all website pages and promptly indexes them). After that, since new pages are added one at a time, they can even be submitted for reindexing individually through the Google webmaster panel, keeping the pages up to date in search engines. Sitemap files for such websites can be generated by the special programs and services described below.

The third website category is websites with up to 100 pages. They include:

- Landing pages (one-page websites for the sale of a particular product or service)

- Promotional websites (for example, websites of cottage settlements)

- "Business card" websites

- Home pages

These websites usually consist of a few pages about a single service, product, or event, and a Sitemap is not vital for them. Their content is rarely updated, new pages are added infrequently, and search robots do not crawl them often because they have few pages. So for these websites, either Sitemap.xml or its predecessor, the HTML sitemap, will do. An HTML sitemap is a regular HTML page, styled to match the website design, that contains links to all internal pages of the website in a hierarchical form (usually a tree). A search robot that visits this page can crawl all website pages and index them or update information about them. Example of such a page:

Note: such pages have now largely lost their relevance due to the transition to the XML format, which does not need to be created manually and can be generated by special programs or services instead (examples are given below).

Thus, we can draw a simple conclusion from all of the above: the more pages your website has and the more often it is updated, the more urgent the need for a Sitemap.xml file. Ideally, this file should be generated on the server automatically, without human intervention.

Important!

A sitemap should include only relevant website pages that are open for indexing and return the server response code 200. Do not include other service pages, technical pages, or pages blocked from indexing in the sitemap.
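You can enforce this rule when you assemble the URL list. The following Python sketch assumes that you already have the HTTP status code of each URL (for example, from your own crawler) in a `statuses` dictionary. It uses the standard `urllib.robotparser` module to drop URLs that robots.txt disallows. `indexable_urls` is a hypothetical helper. It does not check other exclusion signals, such as a noindex robots meta tag, which you would need to handle separately.

```python
from typing import Dict, Iterable, List
from urllib.robotparser import RobotFileParser


def indexable_urls(urls: Iterable[str], statuses: Dict[str, int],
                   robots_txt: str, user_agent: str = "*") -> List[str]:
    """Keep URLs that answered HTTP 200 and are not disallowed by robots.txt."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    result = []
    for url in urls:
        if statuses.get(url) != 200:
            continue  # redirects, 404s, 5xx and unknown URLs are skipped
        if not robots.can_fetch(user_agent, url):
            continue  # blocked by a Disallow rule
        result.append(url)
    return result
```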

Options for generating Sitemap.xml

There are several ways to generate a sitemap, listed below:

1. Sitemap generation with an online generator (keep in mind that these generators are often paid)

There are plenty of online services for generating a sitemap, but they have some limitations:

- Usually such services generate a sitemap of no more than 500 pages for free

- For large websites (from 5,000 pages), generation may take a long time

- Generating a sitemap for a large portal may even fail because the server hosting the service runs out of resources

Example of the online generator XML-Sitemaps.com:

Note: the drawback of this method is that each time you update the website, you must regenerate the sitemap manually and upload it to the server.

2. Automatic generation of Sitemap.xml using a CMS (for example, Bitrix, WordPress, Opencart and other content management systems have this feature)

This is the preferred option: the content management system lets you configure how often the Sitemap is updated and saves webmasters from uploading the sitemap to the website manually.

Example of a Sitemap.xml generation module for Opencart CMS:

3. Sitemap generation with PC software

This option is suitable for small- and medium-sized websites where the content is updated periodically.

Disadvantages of this method:

- After generating a sitemap you have to upload it to the server manually

- Most PC crawler software of this kind is paid.

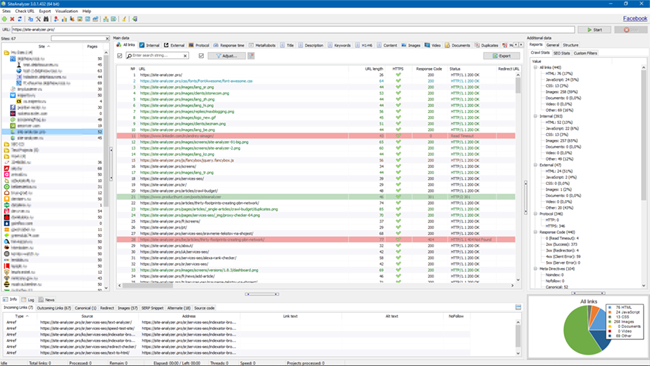

Example of generating Sitemap.xml with the free SiteAnalyzer software:

- Download the software distribution package

- After launching the software, enter the website URL and start crawling

- After crawling, select Projects -> Generate Sitemap in the main menu

- As a result, you get a sitemap (one file, or several files if there are more than 50,000 pages)

- Upload the sitemap to the root of your website via FTP

4. Manual sitemap creation

You can create a sitemap manually for websites with up to 10 pages. However, it is faster to use an online generator or PC software.

To sum up, here are the main points to pay attention to when generating a sitemap:

- For resources with frequently updated content, the sitemap should be generated on the server

- Sitemap.xml should contain only relevant webpages issuing the server response code 200 and allowed for indexing

- For good indexing, update the sitemap whenever the website content is updated

That is all!

Thank you for your attention and see you soon! :-)

Useful things

Sitemap validation services:

- Google Webmaster: https://www.google.com/webmasters/ (Your Website -> Index -> Sitemaps -> Add a new sitemap)

Links to Sitemap description:

- Google Help: https://support.google.com/webmasters/answer/183668

- Protocol description: https://www.sitemaps.org/protocol.html

Author Andrey Simagin

Over 15 years of experience in SEO and marketing. Primary areas of expertise: development of software, tools, and web services for SEO (SiteAnalyzer, SEO Tools, SERPRiver). Website promotion services in Yandex...
